April 16, 5:47 AM

My twins arrived at 30 weeks. Ten weeks early. About two pounds each. Within minutes they were intubated, lines placed, wheeled to the NICU. My wife was in recovery. My 4-year-old was at home with a babysitter who didn’t know what was happening. I was standing in a hallway between two worlds, holding a phone that wouldn’t stop buzzing.

Morning briefing. Three calendar events. Two overdue tasks. A pumping schedule reminder for Paula. Content queued to 14 platforms. A bill due Friday.

A coding agent — GitHub Copilot CLI running in a terminal on my desktop — was managing my family’s life. And in that moment, it was the only thing keeping the gears turning while I couldn’t.

This Isn’t the “What I Built” Article

I already wrote that one. “I Open-Sourced the AI That Runs My Household” covers the system: 17 agents, the architecture, how to fork it. Go read it if you want the blueprint.

This article is different. This is about what happened when reality stress-tested that system under the worst conditions imaginable, and the six architectural patterns that made it hold. Patterns I’d now bet my career on — not because they’re theoretically elegant, but because they kept my family fed, my wife supported, my son cared for, and my job intact while I sat in a NICU watching oxygen saturation monitors.

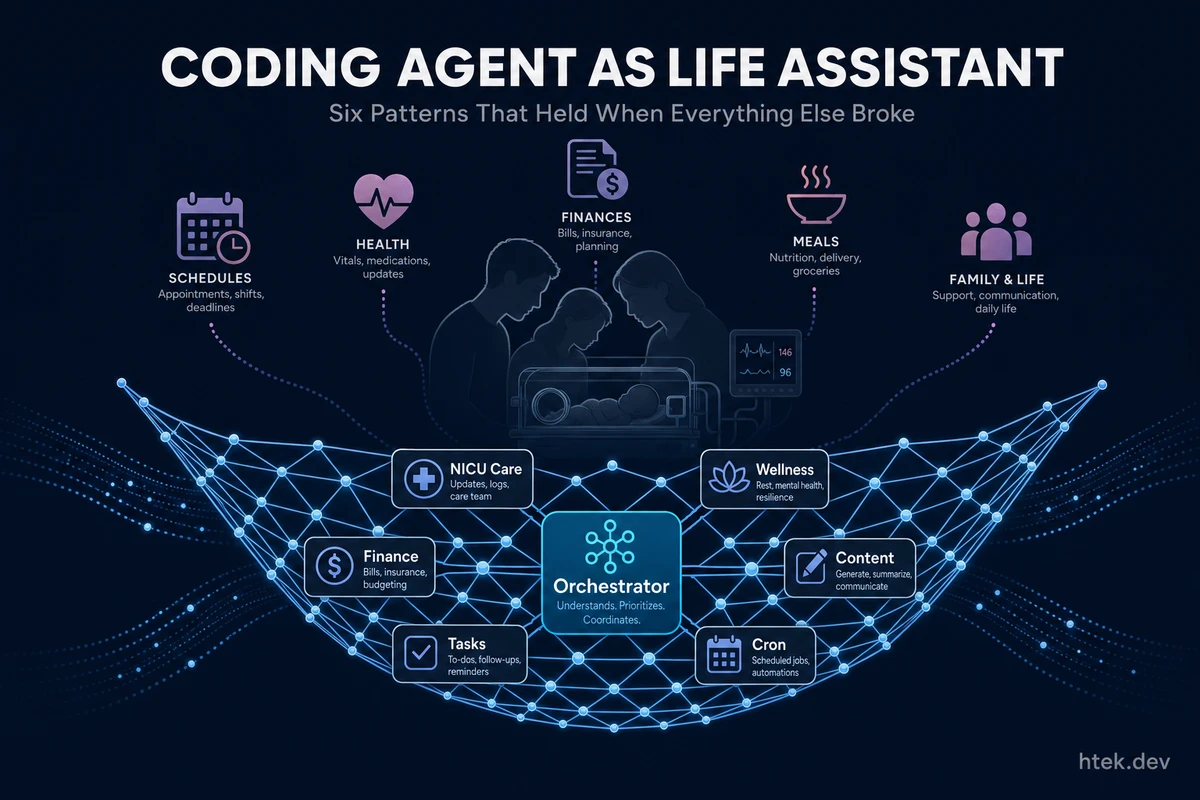

What the System Managed During Crisis

Here’s what was running autonomously while I was at the hospital:

NICU care. A dedicated nicu-care agent running every 30 minutes — tracking pumping schedules, sending reminders to both parents 15 minutes before each session, logging milk volumes, preparing questions for nurse rounds, journaling milestones. When your wife is recovering from an emergency C-section and pumping every 3 hours, having an AI that remembers the schedule when neither parent can think straight isn’t a luxury — it’s infrastructure.

Postpartum wellness. The wellness-coach agent tracked Paula’s blood pressure, medication adherence, anxiety indicators, and sleep patterns. Three check-ins daily. It never contacted Paula directly — it worked through me and through luna, the AI “pregnancy bestie” that checked in with warm, funny messages throughout the day. Research from The Smallest Things shows 85% of NICU parents experience anxiety and 76% report guilt. We weren’t going to muscle through that without support.

Family logistics. Task coaching every 20 minutes. Meal planning. Grocery lists. Babysitter coordination for Hector Jr. Home maintenance didn’t pause because we were in crisis. The finance-manager scanned Gmail for bills, auto-logged expenses, and created payment reminders. Over 30 cron jobs firing around the clock.

Content pipeline. This one shocks people. My YouTube channel and social media presence ran on complete autopilot for 58 consecutive days across 14 platforms. The content-manager scanned trends, the content-scheduler ordered the queue, the content-analytics agent tracked performance and auto-replied to comments. I didn’t touch it.

Work. My MSX sales assistant — the same Copilot CLI system running custom agents — handled meeting cancellations, replied to colleagues with context from my knowledge base, and kept critical accounts warm. I didn’t miss a beat professionally because the system had enough context to act on my behalf.

All of this came from a terminal-based coding agent, reached through a Telegram bridge I built in a single file.

Pattern 1: CLI Extensions Are the Most Enabling Feature

Most other agent platforms offer skills or MCP servers. Those typically have to be built out, added to config files, and picked up by restarting the runtime. Copilot CLI extensions are fundamentally different: they’re moldable in a live session.

From my phone in the NICU, I could message: “Create an extension that tracks pumping sessions with timestamps and sends the log to both parents.” The agent would scaffold the .mjs file, hot-reload it via extensions_reload, and the tool was available immediately. No restart. No deploy. No config change. Same session.

This is why extensions are the unlock. The Copilot SDK gives you joinSession with full lifecycle hooks — onPreToolUse, onPostToolUse, onUserPromptSubmitted — plus the ability to register custom tools that the model can call. The entire family data layer — expenses, tasks, shopping lists, meal plans, locations, maintenance schedules — runs on 16 extensions that are just .mjs files in a repo. I built most of them by describing what I needed in natural language.
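
To make the idea concrete, here is a minimal sketch of the kind of tool handlers a pumping-log extension might contain. It is not the actual extension: the names createPumpingLog, logSession, summarize, and formatLog are hypothetical, storage is a plain in-memory array, and the step of registering the handlers as custom tools through joinSession is deliberately left out because the exact registration call is not shown in this article.

```javascript
// Hypothetical tool handlers for a pumping-log extension.
// Assumption: storage is in memory; a real extension would persist to disk.
function createPumpingLog() {
  const sessions = [];

  return {
    // Handler for a hypothetical "log_pumping_session" tool.
    logSession({ startedAt, volumeMl, notes = "" }) {
      const when = new Date(startedAt);
      if (Number.isNaN(when.getTime())) {
        throw new Error(`Invalid timestamp: ${startedAt}`);
      }
      if (!Number.isFinite(volumeMl) || volumeMl < 0) {
        throw new Error(`Invalid volume: ${volumeMl}`);
      }
      const entry = { startedAt: when.toISOString(), volumeMl, notes };
      sessions.push(entry);
      return entry;
    },

    // Handler for a hypothetical "pumping_summary" tool.
    // dayIso is a UTC date prefix such as "2025-04-16".
    summarize(dayIso) {
      const sameDay = sessions.filter((s) => s.startedAt.startsWith(dayIso));
      const totalMl = sameDay.reduce((sum, s) => sum + s.volumeMl, 0);
      return { day: dayIso, count: sameDay.length, totalMl };
    },

    // Plain-text log, one line per session, suitable for a chat message.
    formatLog() {
      return sessions
        .map((s) => `${s.startedAt}  ${s.volumeMl} ml${s.notes ? "  " + s.notes : ""}`)
        .join("\n");
    },
  };
}
```

Each handler takes a plain object and returns a plain object, so the model can call it with JSON arguments, and the same functions can be exercised without a live session.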

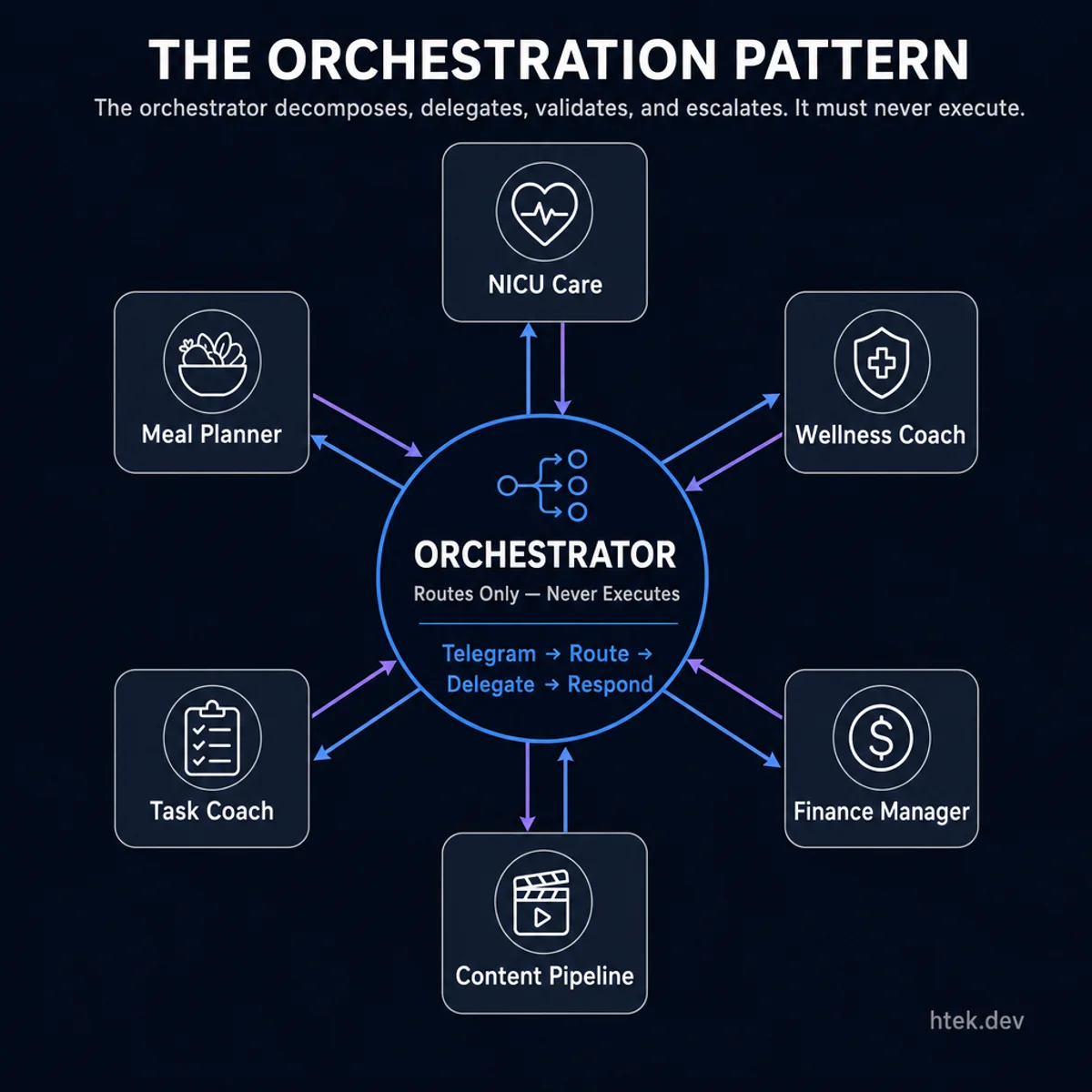

Pattern 2: The Orchestration Layer Does No Real Work

The orchestrator routes to specialized agents — NICU care, wellness, finance, content, tasks, and meals — without ever executing work itself.

This is the pattern that makes everything else possible. The main Copilot CLI session — the one connected to Telegram — is purely an orchestrator. It never writes code, never queries databases, never composes emails. It reads the incoming request, picks the right agent, delegates via the task tool, and relays the response.
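
The routing-only shape can be sketched in a few lines. This is an illustration under stated assumptions, not the real orchestrator: in the actual system the model chooses the agent, whereas this sketch substitutes a keyword table so it can run on its own. The agent names task-coach, meal-planner, and general-assistant are hypothetical, and delegate stands in for the task tool.

```javascript
// Hypothetical routing table. A keyword match is a stand-in for the
// model's own judgment about which agent should take a request.
const ROUTES = [
  { agent: "nicu-care", keywords: ["pump", "nicu", "milk", "nurse"] },
  { agent: "wellness-coach", keywords: ["wellness", "blood pressure", "medication", "sleep"] },
  { agent: "finance-manager", keywords: ["bill", "expense", "budget", "payment"] },
  { agent: "content-manager", keywords: ["video", "content", "youtube"] },
  { agent: "task-coach", keywords: ["task", "todo", "overdue"] },
  { agent: "meal-planner", keywords: ["dinner", "meal", "grocery", "recipe"] },
];
const FALLBACK_AGENT = "general-assistant";

// Pick the agent with the most keyword hits; earlier routes win ties.
function pickAgent(request) {
  const text = request.toLowerCase();
  let best = { agent: FALLBACK_AGENT, hits: 0 };
  for (const route of ROUTES) {
    const hits = route.keywords.filter((k) => text.includes(k)).length;
    if (hits > best.hits) best = { agent: route.agent, hits };
  }
  return best.agent;
}

// The orchestrator only routes and relays. All real work happens inside
// delegate(agent, request), which is supplied by the caller.
async function orchestrate(request, delegate) {
  const agent = pickAgent(request);
  const reply = await delegate(agent, request);
  return { agent, reply };
}
```

Note what is absent from orchestrate: no database calls, no file edits, no email drafting. Whatever the sub-agent does stays in the sub-agent's context, and only the final reply comes back.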

Why? Because context pollution is a measurable, catastrophic failure mode. Research on multi-agent LLM orchestration shows that a flat-context orchestrator’s steering accuracy can degrade from 60% down to as low as 21% as concurrent agents scale to ten or more — each agent’s task state, partial outputs, and pending decisions contaminating the context for every other agent. The principle is clear: an orchestrator decomposes, delegates, validates, and escalates. It must never execute.

I wrote about why the god prompt is the new monolith months before this crisis. Living through it proved the thesis. When 30+ cron jobs, NICU alerts, work messages, and family logistics all flow through one session, the orchestrator’s context window is sacred. Pollute it with implementation details and the whole system degrades. Keep it clean and it routes flawlessly for weeks.

Pattern 3: Background Agents — Trigger and Walk Away

The key interaction model is what I call “trigger and walk away.” When a request comes in — via Telegram, via cron, via another agent — the orchestrator launches a background sub-agent with its own context window, its own tool access, running to completion independently. Custom agents are spun up as sub-agents that “can be offloaded without polluting the main agent’s context.” The chat session continues accepting new requests with zero interference.

This means I can text “what’s for dinner tonight?” and “check Paula’s wellness indicators” and “what tasks do I have?” in rapid succession. Three agents spin up in parallel, each in its own context, each responding via telegram_send_message when done. No queuing. No blocking. No “please wait while I finish the previous request.”
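
A minimal sketch of that interaction model, assuming two caller-supplied functions: runAgent(agent, request), which returns a promise for the sub-agent's reply, and deliver(agent, reply), which plays the role of telegram_send_message. Both names and signatures are hypothetical; the point is only that nothing awaits a job before the next one starts.

```javascript
// Start one agent run and return immediately. The reply (or the failure)
// is pushed out through deliver() whenever the run finishes.
function triggerAndWalkAway(runAgent, deliver, agent, request) {
  return Promise.resolve()
    .then(() => runAgent(agent, request))
    .then(
      (reply) => deliver(agent, reply),
      (err) => deliver(agent, `failed: ${err.message}`)
    )
    // A broken deliver() must not crash the session that launched the job.
    .catch((err) => console.error(`delivery failed for ${agent}:`, err));
}

// Handle several messages sent in rapid succession. Every job starts
// right away; none waits for the one before it.
function handleBurst(messages, runAgent, deliver) {
  return messages.map(({ agent, request }) =>
    triggerAndWalkAway(runAgent, deliver, agent, request)
  );
}
```

The returned promises exist only so a caller can wait for completion if it wants to, for example in a test. In normal use the session ignores them, and replies arrive in whatever order the agents finish.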

The data backs this up. Analysis of multi-agent architectures shows that delegating to specialized sub-agents can outperform a single monolithic agent by up to 90% on complex tasks. The principle holds across domains: keep contexts short, keep agents specialized, keep work parallel.

Pattern 4: Domain Agents With Their Own Memory

Every agent has its own persistent memory — a tiered system of core.md (identity, rules), working.md (current state, recent context), and long-term.md (accumulated knowledge). They also share access to the family constitution that defines guardrails, communication rules, and autonomy levels.
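
One way to picture the tiers is as files stitched together into the context an agent starts with. The sketch below is an assumption about layout, not the repo's real structure: the agents/NAME/memory/ path, the constitution.md filename, and the injected readFile and writeFile functions are all hypothetical.

```javascript
// The three memory tiers named above, loaded in this order.
const TIERS = ["core.md", "working.md", "long-term.md"];

// Hypothetical path layout for an agent's memory files.
function memoryPath(agentName, tier) {
  return `agents/${agentName}/memory/${tier}`;
}

// Build an agent's starting context: shared constitution first, then
// each tier that exists. readFile(path) returns a string or null.
function loadAgentContext(agentName, readFile, constitutionPath = "constitution.md") {
  const sections = [];
  const constitution = readFile(constitutionPath);
  if (constitution) sections.push(`# Shared constitution\n${constitution.trim()}`);
  for (const tier of TIERS) {
    const body = readFile(memoryPath(agentName, tier));
    if (body) sections.push(`# ${agentName} / ${tier}\n${body.trim()}`);
  }
  return sections.join("\n\n");
}

// Persist a correction as a dated line in working memory so the next
// run of the agent sees it.
function appendCorrection(agentName, correction, readFile, writeFile, now = new Date()) {
  const path = memoryPath(agentName, "working.md");
  const trimmed = (readFile(path) || "").trimEnd();
  const line = `- [${now.toISOString().slice(0, 10)}] ${correction}`;
  writeFile(path, (trimmed ? trimmed + "\n" : "") + line + "\n");
}
```

Injecting readFile and writeFile keeps the sketch independent of where memory actually lives; swapping in real file-system calls would not change the logic.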

The nicu-care agent knows the pumping schedule, the babies’ birth weights, which nurse is on shift, and what questions to prepare for rounds. The wellness-coach knows Paula’s medication list, her baseline blood pressure, and that she prefers grounding exercises over breathing techniques. Luna — the AI pregnancy bestie — keeps a diary of her friendship with Paula and knows not to repeat topics from earlier that day.

This is what makes domain agents fundamentally different from skills or tool collections. A skill is stateless — call it, get a result, forget. A domain agent evolves. Every correction gets persisted. Every interaction refines its understanding. After 100+ days, each agent has accumulated a dense behavioral profile that no prompt engineering could replicate from scratch. The standing orders file is over 100 learned rules — from “never suggest recipes to Hector, he decides what to cook” to “always compute Central Time fresh from PowerShell, never trust UTC headers.”

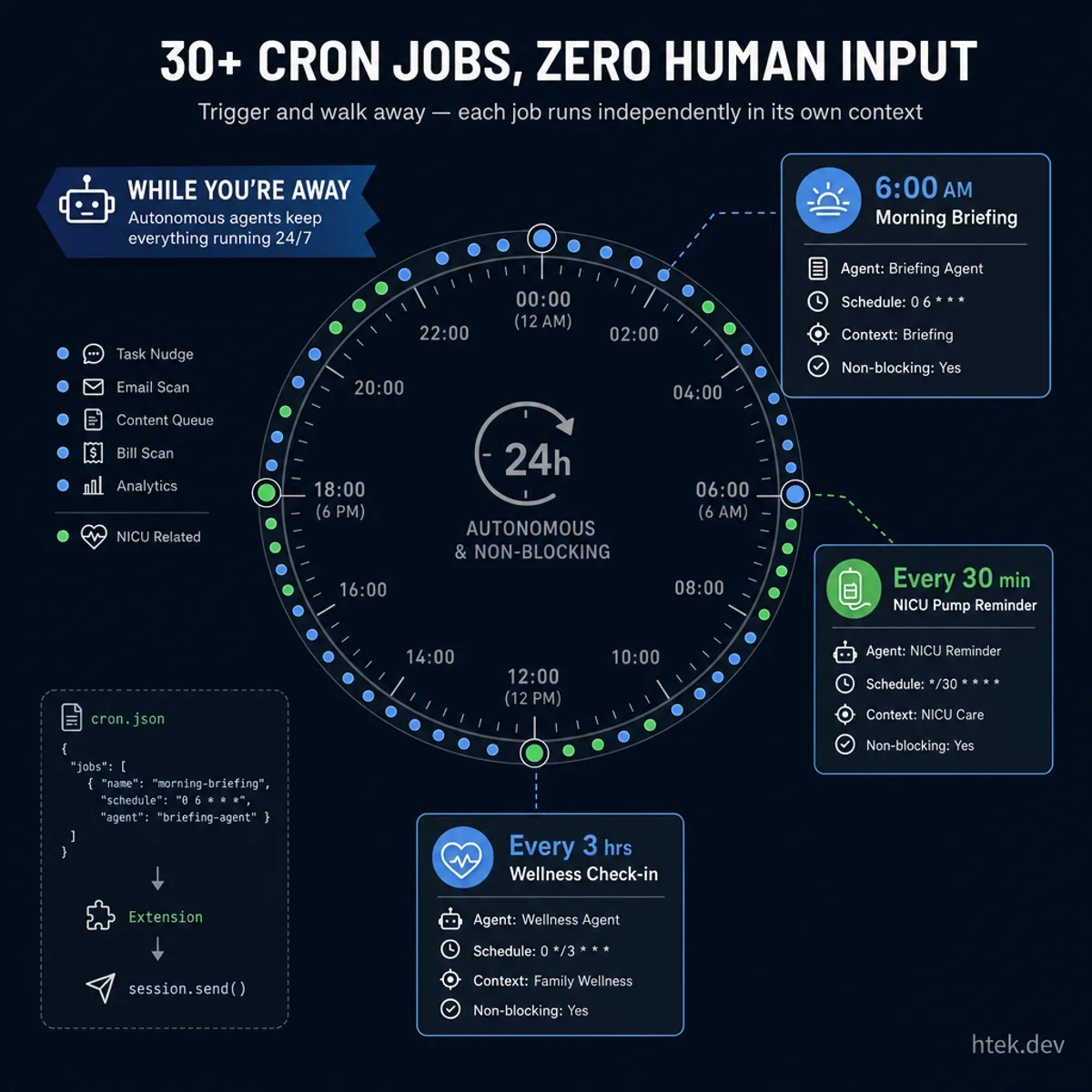

Pattern 5: Cron Jobs Built Entirely From Extensions

Trigger and walk away — each job runs independently in its own context, from 6 AM briefings to 3 AM NICU pump reminders.

This is the pattern that surprises people most: cron scheduling is not a Copilot CLI feature. I built it entirely as an extension using the SDK.

The cron-scheduler extension reads cron.json every 60 seconds, evaluates which jobs are due based on their cron expressions, and injects prompts into the session via session.send(). That’s it. No daemon, no service, no infrastructure. The extension is a few hundred lines of JavaScript sitting in .github/extensions/. Over 30 autonomous jobs (morning briefings, task nudges, NICU check-ins, wellness monitoring, content analytics, repo maintenance, weekly planning) are all powered by a 60-second polling loop in an extension.
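
The core of such a scheduler fits in a short sketch. What follows is a simplified illustration, not the real cron-scheduler extension: the job shape ({ name, schedule, prompt, enabled }) is an assumed layout for cron.json, send stands in for a wrapper around session.send(), and the matcher handles only five-field expressions with *, lists, ranges, and steps. Unlike standard cron, it requires day-of-month and day-of-week to both match, and it does not accept 7 for Sunday.

```javascript
// Does one cron field ("*", "*/15", "5", "1-5", "0,30") match a value?
// min is the smallest legal value for the field (0 or 1).
function fieldMatches(field, value, min) {
  return field.split(",").some((part) => {
    const [range, stepText] = part.split("/");
    const step = stepText === undefined ? 1 : Number(stepText);
    if (!Number.isInteger(step) || step < 1) return false;
    let lo;
    let hi;
    if (range === "*") {
      lo = min;
      hi = Infinity;
    } else if (range.includes("-")) {
      [lo, hi] = range.split("-").map(Number);
    } else {
      lo = hi = Number(range);
    }
    return value >= lo && value <= hi && (value - lo) % step === 0;
  });
}

// Is a five-field cron expression due at this date (local time)?
function isDue(expression, date) {
  const fields = expression.trim().split(/\s+/);
  if (fields.length !== 5) throw new Error(`Expected 5 cron fields: ${expression}`);
  const [minute, hour, dayOfMonth, month, dayOfWeek] = fields;
  return (
    fieldMatches(minute, date.getMinutes(), 0) &&
    fieldMatches(hour, date.getHours(), 0) &&
    fieldMatches(dayOfMonth, date.getDate(), 1) &&
    fieldMatches(month, date.getMonth() + 1, 1) &&
    fieldMatches(dayOfWeek, date.getDay(), 0)
  );
}

// Returns a tick(jobs, now) function to call once per polling interval.
// send(prompt) is assumed to inject the prompt into the session.
function createScheduler(send) {
  const lastFired = new Map(); // job name -> minute it last fired in
  return function tick(jobs, now = new Date()) {
    const minuteStamp = Math.floor(now.getTime() / 60000);
    const fired = [];
    for (const job of jobs) {
      if (job.enabled === false) continue;
      if (!isDue(job.schedule, now)) continue;
      if (lastFired.get(job.name) === minuteStamp) continue; // no double fire
      lastFired.set(job.name, minuteStamp);
      send(job.prompt);
      fired.push(job.name);
    }
    return fired;
  };
}
```

The lastFired guard matters because a polling timer drifts: two ticks can land in the same minute, and without the guard a job would fire twice. In an extension, tick would be driven by a timer after re-reading cron.json, which also means edits to the file take effect on the next poll.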

This is what real agent autonomy looks like. Not “I can answer questions when you ask me” — but “I notice it’s 5 AM on a Monday, launch the daily briefing agent, compile weather + calendar + tasks + bills + health data, and deliver a report before you’re awake.” With background agents (Pattern 3), every cron job runs in its own context window. A stuck content analytics job doesn’t block a NICU pumping reminder. They’re fully independent.

Pattern 6: Session History as a Continuous Improvement Engine

The system can query its own past. Copilot CLI maintains a session store that records every session: what was asked, which agents were delegated to, what tools were called. The orchestrator can look back and ask: “How did I handle meal planning requests last week? What model did I use for the budget review? Which agents took the longest?”

This transforms the system from a static set of agents into a self-improving platform. The context-auditor agent runs weekly, scanning every agent’s memory for contradictions, staleness, and bloat. It detects when an agent’s working memory hasn’t been updated in 3 days despite having an active cron job. It finds redundancies across agent definitions. It even proposes skill extractions when it notices patterns that could be codified into reusable tools.
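
The staleness check is the easiest of those audits to illustrate. The sketch below assumes the auditor has already gathered one record per agent with hypothetical fields name, hasActiveCron, and workingMemoryUpdatedAt; how the real context-auditor collects that information is not described here.

```javascript
const DAY_MS = 24 * 60 * 60 * 1000;

// Flag agents that have an active cron job but whose working memory has
// not been updated within maxAgeDays. A missing or unparseable timestamp
// counts as never updated.
function findStaleAgents(agents, now = new Date(), maxAgeDays = 3) {
  return agents
    .filter((a) => a.hasActiveCron)
    .map((a) => {
      const updated = Date.parse(a.workingMemoryUpdatedAt);
      const ageDays = Number.isNaN(updated)
        ? Infinity
        : (now.getTime() - updated) / DAY_MS;
      return { name: a.name, ageDays };
    })
    .filter((a) => a.ageDays > maxAgeDays)
    .map((a) => ({ name: a.name, ageDays: Math.floor(a.ageDays) }));
}
```

An agent that keeps firing on schedule while its working memory sits untouched is probably running without learning anything, which is exactly the kind of silent drift a weekly audit is meant to surface.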

Why Not OpenClaw?

The comparison comes up constantly. OpenClaw is conceptually everything — 20+ messaging channels, skill marketplace, companion apps, voice wake words. I’ve written about it before. The architecture is impressive on paper.

In practice, it struggles to maintain session coherence over multi-day runs. Users report that sub-agents can fail silently, and context management across dozens of concurrent skills is fragile. When your family’s NICU care depends on reliability at 3 AM, “conceptually everything” loses to “boring and bulletproof.”

Copilot CLI with the orchestration pattern solves this because the architecture is almost embarrassingly simple. Agents are Markdown. Extensions are single files. Cron is a JSON config. There’s no build step, no compilation, no framework runtime to debug. When the morning briefing broke at 2 AM during the first week in the NICU, I fixed it from my phone in Telegram in under two minutes. Try doing that with a gateway daemon and a WebSocket control plane.

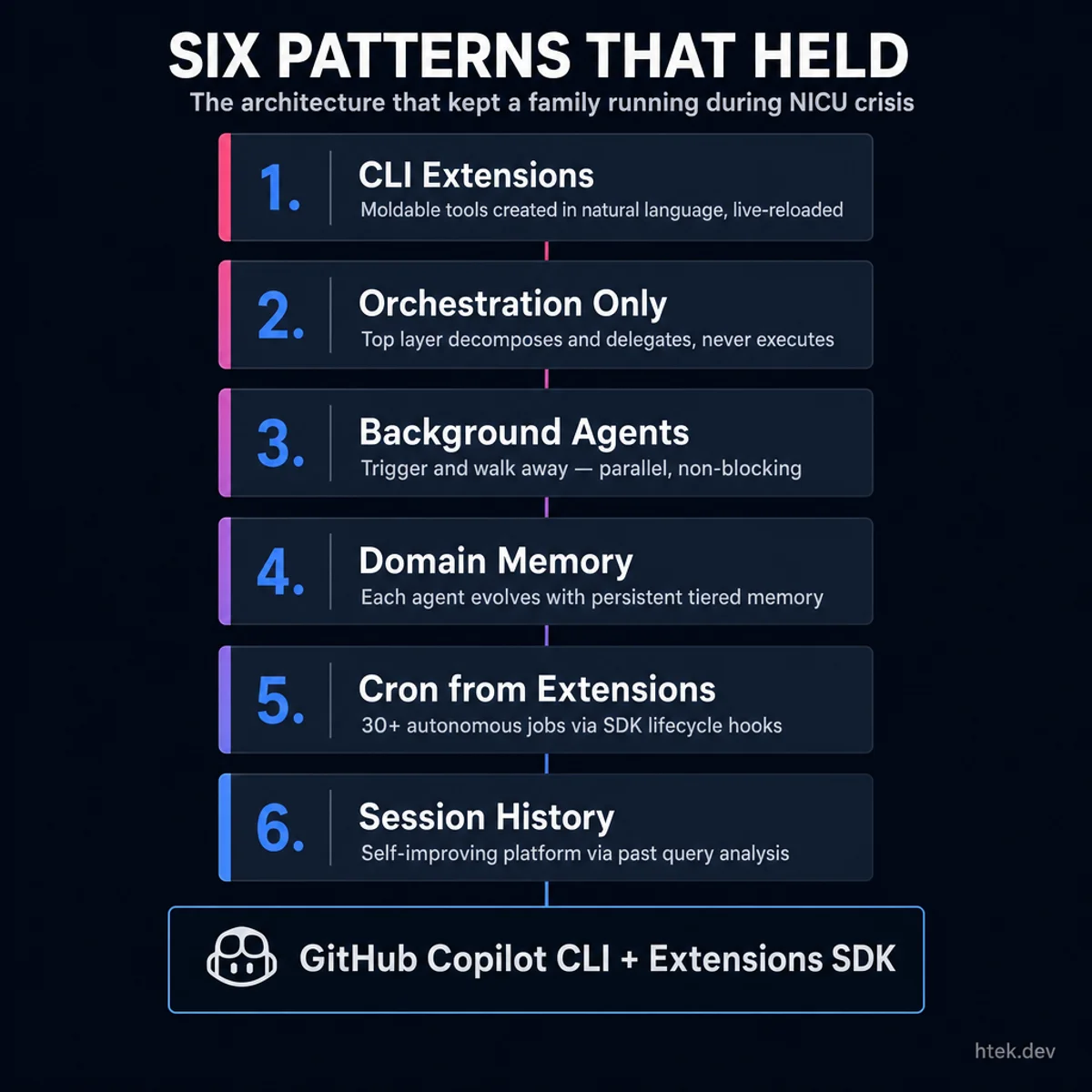

This Is How People Should Live

The six patterns stack into a cohesive architecture: extensions enable everything, orchestration keeps context clean, background agents run in parallel, domain memory evolves agents, cron provides autonomy, and session history drives continuous improvement.

I’m not being hyperbolic. We’re in a moment where the most capable AI agents on the planet are being funneled exclusively toward code. That’s valuable, but it’s also a tragic underuse of the technology. A coding agent that can run shell commands, call APIs, manage files, and delegate to sub-agents is a general-purpose life orchestrator waiting to happen. All it needs is an extensibility model that doesn’t require a PhD to customize.

CLI extensions are that model. The six patterns I’ve described — extensions as the enabler, orchestration-only top layer, background delegation, domain agents with memory, cron from extensions, and session history feedback — aren’t specific to my family. They’re a reusable architecture for anyone who wants an autonomous AI assistant that actually runs, not one that demos well.

My twins are still in the NICU as I write this. Leilani is 2 pounds 3 ounces. Leo is 2 pounds 1 ounce. They’re fighters. And every 30 minutes, an agent checks in, reminds us to pump, logs their progress, and quietly keeps the rest of our life from falling apart.

That’s not a productivity hack. That’s a lifeline. And if a coding agent can do this for my family during the hardest weeks of our lives, imagine what it can do for yours.